There’s a moment every startup engineer knows too well.

It’s 11 PM. The product launch is tomorrow. Someone just merged a “small fix” and now three things are broken that weren’t broken before. The QA backlog is stacking up, the team is exhausted, and somewhere between the sprint planning and the release checklist, quality quietly slipped through the cracks.

This used to just be the cost of moving fast. In 2026, it doesn’t have to be.

AI-powered quality assurance has fundamentally changed how startups think about testing – not as a slow, painful bottleneck at the end of the pipeline, but as an intelligent, always-on system that catches problems before they reach your users. And for lean teams trying to ship fast without breaking things, that shift is nothing short of a game-changer.

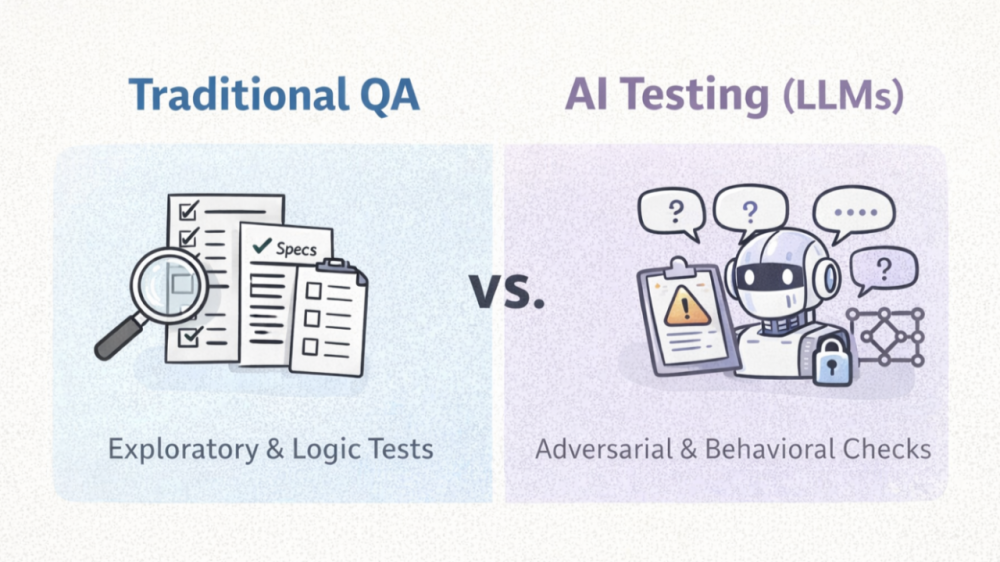

Figure: Traditional QA and AI-powered QA

The Old QA Problem Startups Know Too Well

Let’s be honest about what traditional QA looked like for most early-stage teams.

You either had one overworked QA engineer manually running through test cases before every release, or you had developers writing tests in their spare time – which meant almost never. Automated test suites existed, but they were brittle: the kind that break every time someone changes a button label, restructures a page layout, or adds a new feature. Maintaining them took almost as much effort as building the product itself.

The result? Startups faced a painful tradeoff: release fast and risk bugs reaching production, or slow down to test properly and fall behind. Neither option felt good. And most teams, under pressure to ship, chose speed – and quietly accepted that bugs were just part of the deal.

That tradeoff is disappearing. Fast.

What the Numbers Actually Say

Before diving into how AI QA works, it’s worth grounding this in reality. Because these aren’t just trends on a slide deck – they’re numbers that reflect a genuine shift in how software gets built and shipped.

| Metric | Stat | Source |
| --- | --- | --- |
| Global software testing market (2024) | $55.8 billion | ThinkSys QA Trends 2026 |
| Projected market size by 2034 | $112.5 billion | ThinkSys QA Trends 2026 |
| Teams adopting AI-driven testing by 2026 | 80% | QA Trends Report 2026 |
| Organizations piloting generative AI in QA | 89% | World Quality Report 2025 |
| QA efficiency improvement with AI tools | 300–500% | Virtuoso QA |
| Reduction in test maintenance overhead | 60–80% | remote.qa |
| Cost reduction from AI in QA workflows | 50–70% | DeviQA |
| Regression cycle time reduction (Bloomberg) | 70% | Qadence AI |

These numbers paint a picture that’s hard to ignore. The industry isn’t just experimenting with AI in testing – it’s adopting it at scale, and the early results are compelling.

What AI-Powered QA Actually Means in 2026

“AI-powered QA” is one of those phrases that gets thrown around so often it starts to lose meaning. So let’s be specific about what it actually does – in plain, practical terms.

Self-Healing Test Automation

Traditional automated tests are fragile. They break when a UI element moves, when a button gets renamed, or when a new feature shifts the page layout. AI-powered testing tools now use machine learning to recognize what changed and automatically re-bind tests to the correct elements. Your CI/CD pipeline stays stable even as your product evolves rapidly.

For a startup pushing multiple releases a week, that alone saves hours of maintenance work every sprint.
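To make the idea concrete, here is a minimal sketch of the "re-binding" step. It assumes each test stores a small fingerprint of its target element (id, visible text, role) and that the page is available as a list of plain dictionaries. Commercial tools use trained models and far richer signals; the string-similarity heuristic, the `find_element` name, and the 0.6 threshold below are illustrative assumptions, not how any particular product works.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity between two strings, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def find_element(elements, fingerprint, threshold=0.6):
    """Locate an element by its stored fingerprint.

    Returns (element, healed). `healed` is True when the exact id was
    missing and the element was re-bound by similarity instead.
    """
    # Fast path: the id recorded when the test was written still exists.
    for el in elements:
        if el.get("id") == fingerprint["id"]:
            return el, False

    # Healing path: average the similarity of id, text and role.
    def score(el):
        keys = ("id", "text", "role")
        return sum(
            similarity(el.get(k, ""), fingerprint.get(k, "")) for k in keys
        ) / len(keys)

    best = max(elements, key=score, default=None)
    if best is not None and score(best) >= threshold:
        return best, True
    raise LookupError(f"no element resembles {fingerprint['id']!r}")


# Fingerprint captured when the test was first recorded (hypothetical data).
fingerprint = {"id": "submit-btn", "text": "Submit", "role": "button"}

# The page after a refactor: the button was renamed and relabelled.
page = [
    {"id": "nav-home", "text": "Home", "role": "link"},
    {"id": "submit-button", "text": "Submit order", "role": "button"},
]

element, healed = find_element(page, fingerprint)
print(element["id"], healed)
```

The threshold is the part worth tuning on your own app: too low and the test silently binds to the wrong element, too high and it breaks as often as a plain selector would.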

AI Test Generation

Instead of engineers writing test cases from scratch, modern platforms can read your application’s behavior, interpret user flows, and generate comprehensive test scenarios automatically. Natural language interfaces mean even non-technical team members can describe a workflow in plain English and watch the system produce a test for it. What used to take 8–12 hours to write can now be done in minutes.

Predictive Defect Detection

This is where things get genuinely powerful. AI systems analyze patterns across code commits, test results, and production incidents to flag high-risk areas before testing even begins. Instead of running every test on every build, intelligent systems prioritize the areas most likely to break – helping small teams focus their energy where it actually matters.
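A rough way to picture the risk-flagging step is a score per module built from the three signals mentioned above. The sketch below is a deliberately simple stand-in: the `ModuleHistory` fields, the hand-picked weights, and the sample numbers are all assumptions for illustration, whereas a real system would learn the weighting from your own history.

```python
from dataclasses import dataclass


@dataclass
class ModuleHistory:
    name: str
    recent_commits: int        # churn over some recent window
    failed_test_runs: int      # test failures attributed to this module
    production_incidents: int  # incidents traced back to this module


def risk_score(module, weights=(1.0, 2.0, 5.0)):
    """Weighted sum of the three signals; incidents count the most."""
    w_commit, w_fail, w_incident = weights
    return (
        w_commit * module.recent_commits
        + w_fail * module.failed_test_runs
        + w_incident * module.production_incidents
    )


def rank_by_risk(modules):
    """Highest-risk modules first, so they get tested first."""
    return sorted(modules, key=risk_score, reverse=True)


# Hypothetical history for three modules.
modules = [
    ModuleHistory("profile", recent_commits=2, failed_test_runs=0, production_incidents=0),
    ModuleHistory("search", recent_commits=20, failed_test_runs=1, production_incidents=0),
    ModuleHistory("checkout", recent_commits=12, failed_test_runs=3, production_incidents=2),
]

for m in rank_by_risk(modules):
    print(m.name, risk_score(m))
```

Even this crude version surfaces something useful: `checkout` outranks `search` despite fewer commits, because past incidents weigh more than raw churn.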

Continuous Quality Feedback in CI/CD

When AI tools are embedded directly into the CI/CD pipeline, every push automatically triggers the relevant tests, flags regressions, and surfaces actionable insights in real time. Quality stops being a stage at the end of development and becomes a constant signal throughout it.
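The "flag regressions" part can be as simple as comparing this build's results with the last one. The sketch below assumes test results are available as a name-to-pass/fail mapping; the `gate` function and the sample data are hypothetical, and a real pipeline would read results from its test runner's report instead.

```python
def find_regressions(previous, current):
    """Tests that passed on the previous build but fail on this one."""
    return sorted(
        name
        for name, passed in current.items()
        if not passed and previous.get(name, False)
    )


def gate(previous, current):
    """Return a process exit code: 1 if anything regressed, else 0."""
    regressions = find_regressions(previous, current)
    for name in regressions:
        print(f"REGRESSION: {name}")
    return 1 if regressions else 0


# Hypothetical results from the last green build and the current push.
previous_build = {"test_login": True, "test_search": False, "test_cart": True}
current_build = {
    "test_login": False,   # was passing, now fails: a regression
    "test_search": False,  # already failing before: not a new regression
    "test_cart": True,
    "test_export": False,  # brand-new test: no baseline to regress from
}

exit_code = gate(previous_build, current_build)
print("exit code:", exit_code)
```

Separating new regressions from known failures is what keeps the signal actionable: the developer who just pushed sees only what their change broke.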

Before AI QA vs. After AI QA: A Real Comparison

Here’s what the day-to-day reality looks like for a startup team, before and after adopting AI-powered QA.

| Area | Before AI QA | After AI QA |
| --- | --- | --- |
| Test creation | Manual, time-consuming, requires technical skill | Auto-generated from user stories or natural language |
| Test maintenance | Constant manual updates when UI changes | Self-healing – adapts automatically to changes |
| Test coverage | Limited by team capacity and bandwidth | Broader coverage with AI-suggested edge cases |
| Release frequency | Slowed by QA backlog | Continuous releases with embedded quality checks |
| Bug detection | Often caught in production | Caught pre-deployment via predictive defect analysis |
| Team required | Dedicated QA engineer (or nobody) | Any team member can contribute with no-code tools |
| CI/CD integration | Manual trigger, inconsistent | Fully automated, runs on every commit |
| Cost | High (manual hours + rework) | Lower (automation handles repetitive work) |

This isn’t just a marginal upgrade. It’s a completely different relationship between your team and quality.

Why This Matters More for Startups Than Anyone Else

Enterprise companies have large QA teams, dedicated tooling budgets, and the luxury of slower release cycles. Startups have none of that.

What startups do have is pressure – pressure to ship features, respond to user feedback, and move faster than competitors who have ten times the headcount. In that environment, a QA bottleneck isn’t just annoying. It’s existential.


Here’s the thing that often gets missed: bugs don’t just affect your users. They eat your engineering time. They slow down your roadmap. They erode trust – both with customers and with investors. A widely cited figure attributed to IBM’s Systems Sciences Institute holds that fixing a bug after a product release can cost four to five times more than catching it at the design stage. Let that sink in for a moment.

For a five-person team, the math is simple. Every hour spent debugging a production issue is an hour not spent building the next feature. Every regression that slips through is a support ticket, a churned user, or a refund request. AI QA doesn’t just help you move faster – it helps you stop bleeding time on the work that shouldn’t need to happen at all.

And here’s the really good news for startups specifically: no-code, natural language test writing powered by AI means you no longer need a highly technical QE team to maintain full coverage. That democratization – giving any team the ability to ship with confidence – is one of the most meaningful shifts in how software gets built right now.

The Top AI QA Tools for Startups in 2026

Not all AI testing tools are built for startups. Some are designed for large enterprises with complex legacy systems and teams of 50 QA engineers. Here’s an honest breakdown of the tools worth knowing about as a lean team.

| Tool | Best For | Key Feature | Startup-Friendly? | Starting Price |
| --- | --- | --- | --- | --- |
| Testsigma | Full-stack testing, small to large teams | Natural language test authoring, self-healing, 90% maintenance reduction | Yes | Free tier; $99/month (Startup) |
| Mabl | Web apps, agile teams | Low-code E2E testing, visual regression, CI/CD integration | Yes | Contact for pricing |
| KaneAI (TestMu) | Fast-moving teams wanting NLP authoring | GenAI-native QA agent, multi-language code export | Yes | From $15/month |
| Bug0 Studio | Teams wanting E2E tests from recordings | Video/screen-to-test generation, auto-healing selectors | Yes | From $250/month |
| Katalon | Teams needing web + mobile + API coverage | AI-assisted test creation, generous free tier | Yes | Free tier available |
| QA Wolf | Teams that want zero QA involvement | Done-for-you service with human + AI coverage | Budget dependent | From $5K/month |
| Applitools | Visual UI validation | AI-powered screenshot comparison | Better for mid-size | $10K–$50K/year |
| Tricentis / Testim | Enterprise compliance environments | Smart locators, SAP/Salesforce support | Enterprise-only | $30K–$100K/year |

Quick guidance for startup teams:

- 1–5 engineers: Start with Katalon (free tier) or KaneAI ($15/month). Low friction, quick value.

- 5–20 engineers: Mabl or Testsigma. Strong autonomy, excellent CI/CD integrations, solid support.

- 20+ engineers or funded startup: Bug0 Studio or QA Wolf if you want coverage without the overhead.

The rule of thumb: validate self-healing capability on your own app before committing to any tool. Ask vendors to demo on your actual codebase, not their demo app.

Figure: Comparing AI-assisted vs. traditional testing

Real Patterns Emerging Across Startup Teams in 2026

The most successful startup engineering teams in 2026 aren’t just adopting AI testing tools – they’re rethinking how quality fits into their entire development culture. A few patterns stand out consistently.

Shift-left, for real this time. The “shift-left” movement has been talked about for years, but AI makes it actually practical. Testing now begins at the design stage – user stories and requirements are checked for gaps, unit and API tests are suggested early, and static analysis runs continuously during development. Bugs caught at the requirement stage cost a fraction of what they cost to fix in production.

QA without a full QA team. Hyper-automation and scriptless testing are now standard, not “next-gen.” With faster releases, complex apps, and limited QA headcount, teams need automation that doesn’t require deep coding skills. AI testing tools now let product managers and designers participate in quality assurance without writing a single line of code. That’s a real cultural shift – and a healthy one.

Smarter test prioritization. Not every test needs to run on every build. AI systems learn which parts of your codebase are most volatile, which user flows are highest risk, and which tests are most likely to surface real bugs – and they prioritize accordingly. Facebook’s published research on predictive test selection reported roughly halving the infrastructure cost of testing code changes while still catching more than 95% of individual test failures. The same techniques are now accessible to startups through modern tooling.

AI QA maturity as a competitive signal. Increasingly, the question isn’t just “do you have automated tests?” – it’s “how intelligent is your QA infrastructure?” Teams that reach what practitioners call “Level 3–4” AI QA maturity (self-healing tests, AI-driven prioritization, integrated CI/CD reporting) are shipping faster, experiencing fewer production incidents, and building more confidence with investors and customers alike.
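The test prioritization pattern above can be sketched in a few lines. This version assumes you keep a log of (changed file, test, failed?) records from past CI runs; it estimates how often each test failed when a given file changed and selects the tests above a cutoff. The function names, the 0.3 threshold, and the sample history are assumptions for illustration. Production systems use a trained model over many more features, and fall back to running tests they have no history for.

```python
from collections import defaultdict


def failure_rates(history):
    """Estimate P(test fails | file changed) from past CI runs.

    `history` is an iterable of (changed_file, test_name, failed) tuples.
    """
    runs = defaultdict(int)
    fails = defaultdict(int)
    for changed_file, test_name, failed in history:
        runs[(changed_file, test_name)] += 1
        fails[(changed_file, test_name)] += int(failed)
    # Laplace smoothing: a pair seen once should not score exactly 0 or 1.
    return {key: (fails[key] + 1) / (runs[key] + 2) for key in runs}


def select_tests(changed_files, rates, threshold=0.3):
    """Tests whose estimated failure rate for any changed file meets the cutoff."""
    selected = {
        test_name
        for (changed_file, test_name), p in rates.items()
        if changed_file in changed_files and p >= threshold
    }
    return sorted(selected)


# Hypothetical CI history.
history = [
    ("cart.py", "test_checkout", True),
    ("cart.py", "test_checkout", True),
    ("cart.py", "test_checkout", False),
    ("cart.py", "test_login", False),
    ("cart.py", "test_login", False),
    ("cart.py", "test_login", False),
    ("cart.py", "test_login", False),
    ("auth.py", "test_login", True),
    ("auth.py", "test_login", False),
]

rates = failure_rates(history)
print(select_tests({"cart.py"}, rates))
print(select_tests({"auth.py"}, rates))
```

With this history, a change to `cart.py` selects only `test_checkout`, since `test_login` has never failed alongside it, while a change to `auth.py` selects `test_login`.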

The AI QA Maturity Model: Where Does Your Team Sit?

This 5-level framework helps you benchmark where your team is today – and figure out what to work toward next.

| Level | Name | What It Looks Like | Goal |
| --- | --- | --- | --- |
| Level 1 | Manual | Exploratory testing only, no automation. Releases gated by manual regression runs. | Get a baseline automated suite |
| Level 2 | Scripted Automation | Basic automated tests exist but are brittle and high-maintenance. | Introduce self-healing frameworks |
| Level 3 | AI-Assisted | Self-healing tests + AI test generation + CI/CD integration. Partial coverage. | Expand coverage, add predictive triage |
| Level 4 | AI-Driven | Full AI QA pipeline. Predictive defect detection, smart test prioritization, autonomous maintenance. | Optimize and scale |
| Level 5 | Autonomous | Fully agentic QA. AI agents plan, write, execute, and maintain tests with minimal human input. | Research/hyperscaler territory – aspirational for most |

Most funded startups in 2026 should be targeting Level 3–4. It’s achievable within 3–6 months with the right tooling and approach.

A Realistic Path to Getting Started

Here’s the thing about AI QA adoption that most tool vendors won’t tell you: AI amplifies good practices, but it doesn’t fix broken ones. If your CI pipeline is unreliable or your test architecture is fragmented, adding AI on top won’t save you. Sort the foundation first.

With that said, here’s a practical 4-step path for startup teams:

Step 1: Audit your current test coverage. Before buying anything, understand what you have. What percentage of your core user journeys have automated coverage? Where are your biggest coverage gaps? What’s your defect escape rate, meaning the share of defects first found in production rather than before release?

Step 2: Start with self-healing selectors. If you already have Playwright or Cypress suites, migrating to a self-healing framework delivers immediate ROI with minimal disruption. This is the fastest win for most teams.

Step 3: Layer in AI test generation. Once your existing tests are stable, start using AI to generate new test cases – especially for edge cases and regression scenarios that your team hasn’t had time to cover.

Step 4: Embed it all in CI/CD. Make quality a signal that runs on every commit, not a checkpoint that happens before release. That’s when the real velocity gains show up.
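The audit in Step 1 boils down to two numbers you can compute on a napkin. Here is a small sketch, assuming you can list your core user journeys and count defects by where they were found. The journey names and defect counts are made up, and "defect escape rate" is taken here to mean production defects as a share of all defects found in the period.

```python
def journey_coverage(journeys: dict[str, bool]) -> float:
    """Percentage of core user journeys that have automated coverage."""
    if not journeys:
        return 0.0
    return 100 * sum(journeys.values()) / len(journeys)


def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Percentage of all defects that were first found in production."""
    total = found_in_production + found_before_release
    if total == 0:
        return 0.0
    return 100 * found_in_production / total


# Hypothetical audit data: True means the journey has an automated test.
journeys = {
    "sign up": True,
    "log in": True,
    "checkout": True,
    "password reset": False,
    "export report": False,
}

coverage = journey_coverage(journeys)
escape_rate = defect_escape_rate(found_in_production=6, found_before_release=24)

uncovered = [name for name, covered in journeys.items() if not covered]
print(f"journey coverage: {coverage:.0f}%")
print(f"defect escape rate: {escape_rate:.0f}%")
print("gaps:", uncovered)
```

Write both numbers down before you trial any tool. They are the baseline you will compare against a few sprints later.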

Figure: The 4-step roadmap (Audit → Self-Healing → AI Generation → CI/CD Integration)

The Agentic Era Is Already Here

If everything above sounds impressive, here’s the part that’s genuinely exciting.

As of 2026, the software development industry is in what practitioners are calling the Agentic Epoch – a phase where AI agents don’t just assist with testing, they autonomously plan it, execute it, and fix it. Following the explosive adoption of generative AI in 2024 and 2025, over $1.5 billion in venture capital has flowed into AI testing platforms, with 40+ startups competing across test generation, end-to-end automation, security testing, and performance validation.

This isn’t science fiction. Tools available today handle dynamic IDs, shadow DOM, complex layout changes, two-factor authentication, multi-role user workflows, and API contract testing – the exact edge cases that used to require expensive senior QA engineers to configure and maintain manually.

Where QA teams once wrote scripts and ran tests, they now define quality objectives, oversee AI-generated results, and ensure automated decisions align with business priorities. That’s a fundamentally different job – and honestly, a more interesting one. The repetitive work moves to the machine. The judgment-intensive work stays with the human. That’s a trade most engineers will happily make.

Quality and Speed Are No Longer Opposites

The biggest mindset shift AI-powered QA demands is this: quality is not the enemy of speed. It never was – but manual testing made it feel that way.

For years, startups treated testing as something you did when you had time – which meant almost never, or only when something broke badly enough to force it. That approach created technical debt, eroded user trust, and eventually slowed teams down far more than the testing ever would have.

In 2026, the startups shipping the fastest are also the ones taking quality the most seriously. They’re not doing it by hiring armies of testers. They’re doing it by letting AI handle the repetitive, the brittle, and the predictable – so their engineers can focus on the work that actually requires human judgment.

The question isn’t whether AI-powered QA is worth it. For most startup teams, the real question is: how much longer can you afford to ship without it?

Building something and figuring out your QA setup? Drop a comment below – happy to share what’s working for teams at different stages. And if you found this useful, feel free to share it with your engineering lead or CTO.